The Dress That Broke the Internet

A Sandbox Experiment in Internal Rendering and the Illusion of Shared Reality

The Observation

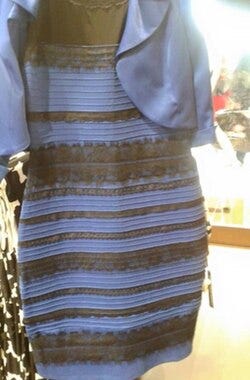

Look at the image above. Do not analyze it. Do not try to remember what the internet told you a decade ago. Just look at it right now, in your current environment, with your current eyes.

What color is this dress?

If you see White and Gold, your hardware is telling you one story. If you see Blue and Black, it’s telling you another.

The Objective Fact: The pixel values stored in that image are exactly the same for every person reading this. Yet we are each experiencing a fundamentally different reality.

The Sandbox Experiment

Welcome to the first session in The Reality Sandbox.

In 2015, this image caused a global system crash. It wasn’t just a “meme”; it was the accidental exposure of a biological secret. It proved that “Reality” isn’t a single, objective broadcast that we all tune into.

Reality is an Internal Render.

Your brain is not a window; it is a high-speed graphics engine. It takes raw, messy data (photons hitting your retina) and, before you ever “see” it, runs that data through a complex set of pre-installed algorithms to render a world that is navigable and meaningful.

Why the Hardware is Glitching

This specific photo is a “perfect storm” for your biological hardware because the lighting source is completely ambiguous.

Because your brain doesn’t know what kind of light is falling on the dress, it has to guess the color of that light and correct for it, a process known as Color Constancy. It essentially asks: “What color is the light in this room? I need to subtract that ‘pollution’ so I can show the user the ‘true’ color of the dress.”

The Shadow Render: If your brain assumes the dress is in a cool shadow, it subtracts the blue tones and projects White and Gold.

The Artificial Render: If your brain assumes the dress is under warm artificial light, it subtracts the yellow tones and projects Blue and Black.
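The Shadow and Artificial renders above can be sketched with a von Kries-style correction, a standard textbook model of color constancy in which each color channel is divided by the assumed color of the light. This is a minimal sketch under stated assumptions, not a claim about how the visual system actually computes color: the stripe pixel and both illuminant guesses are hypothetical numbers chosen for illustration, and `discount_illuminant` is a made-up helper name.

```python
# Minimal von Kries-style sketch of illuminant discounting.
# ASSUMPTION: every number below is a hypothetical value for illustration,
# not a measurement from the photo or a model of real neural processing.


def discount_illuminant(pixel, illuminant):
    """Estimate surface color by dividing each RGB channel by the assumed light.

    Both arguments are (r, g, b) tuples in 0..1. The result is rescaled so its
    brightest channel is 1.0, so the comparison is about hue, not brightness.
    """
    raw = [p / i for p, i in zip(pixel, illuminant)]
    peak = max(raw)
    return tuple(round(c / peak, 3) for c in raw)


# Hypothetical reading from a light stripe: a pale, slightly violet blue.
stripe = (0.55, 0.60, 0.78)

# Hypothetical guesses about the light falling on the dress.
cool_shadow = (0.70, 0.80, 1.00)  # bluish light, as in daylight shadow
warm_indoor = (1.00, 0.85, 0.60)  # yellowish artificial light

print("Shadow render:    ", discount_illuminant(stripe, cool_shadow))
print("Artificial render:", discount_illuminant(stripe, warm_indoor))
```

Dividing out a bluish light pulls the same pixel toward a near-neutral white, while dividing out a yellowish light pushes it toward a saturated blue. Same input, two different guesses about the light, two different renders.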

Data Collection (Your Input Needed)

To move forward with our diagnostic, we need to see the current calibration of our research group.

What does your hardware project?

The Global Baseline

Now that you’ve logged your data, how do you compare to the global population?

Statistically, you aren’t alone in your version of the truth, but you are part of a divided population. In global studies of this specific “glitch,” the hardware split is roughly:

57% Blue and Black

30% White and Gold

13% Fluctuating or Mixed

Even within those groups, the vibrancy of the gold or the depth of the blue is tailored specifically to the viewer. We are looking at one image, but we are inhabiting thousands of different worlds.

The Inside-Out Shift

Here is the most important takeaway of this session: The color you see is not on your screen. If you were to sample the pixels in a color picker, you would find they are actually a muddy, neutral tan and a muted violet-blue. The “Gold” or the “Deep Blue” you are experiencing is being projected from inside your own skull. Your hardware is filling in the blanks based on its own internal calibration.
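To see why a color-picker reading comes out “muddy” and “muted,” you can convert it to hue, saturation, and brightness with Python's standard `colorsys` module. The RGB triples below are hypothetical placeholders, not values measured from the photo, and `describe` is a made-up helper; swap in whatever your own color picker reports.

```python
import colorsys


def describe(rgb):
    """Convert an 8-bit (r, g, b) reading to (hue in degrees, saturation, brightness).

    colorsys expects floats in 0..1, so each channel is divided by 255 first.
    Saturation and brightness are returned as fractions in 0..1.
    """
    r, g, b = (channel / 255 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return h * 360, s, v


# HYPOTHETICAL color-picker readings, not measured from the actual photo.
samples = {
    "lace (seen as gold or black)": (125, 110, 80),
    "fabric (seen as white or blue)": (140, 145, 195),
}

for name, rgb in samples.items():
    hue, sat, val = describe(rgb)
    print(f"{name}: hue {hue:.0f} deg, saturation {sat:.0%}, brightness {val:.0%}")
```

With these placeholder values, both readings land well under half saturation at middling brightness: a dull tan and a grayish violet-blue, neither vividly gold nor deeply blue on its own.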

You aren’t seeing the world as it is. You are seeing a version of the world that your hardware renders from within.

Coming Up Next...

Why did your brain choose the specific “White Balance” it did? Why does your spouse see the exact opposite?

Tomorrow, we move from the Sandbox to the Hardware Diagnostic phase. I’m going to show you how your lifestyle—when you wake up, where you work, and your “Lark vs. Owl” profile—has recalibrated your firmware to see a different reality than the person sitting next to you.

Stand by for tomorrow’s Diagnostic.