Hardware Diagnostic: The Circadian Calibration

Why your internal engine “glitched” on a viral dress, and what it reveals about the world you’ve rendered.

The Recap

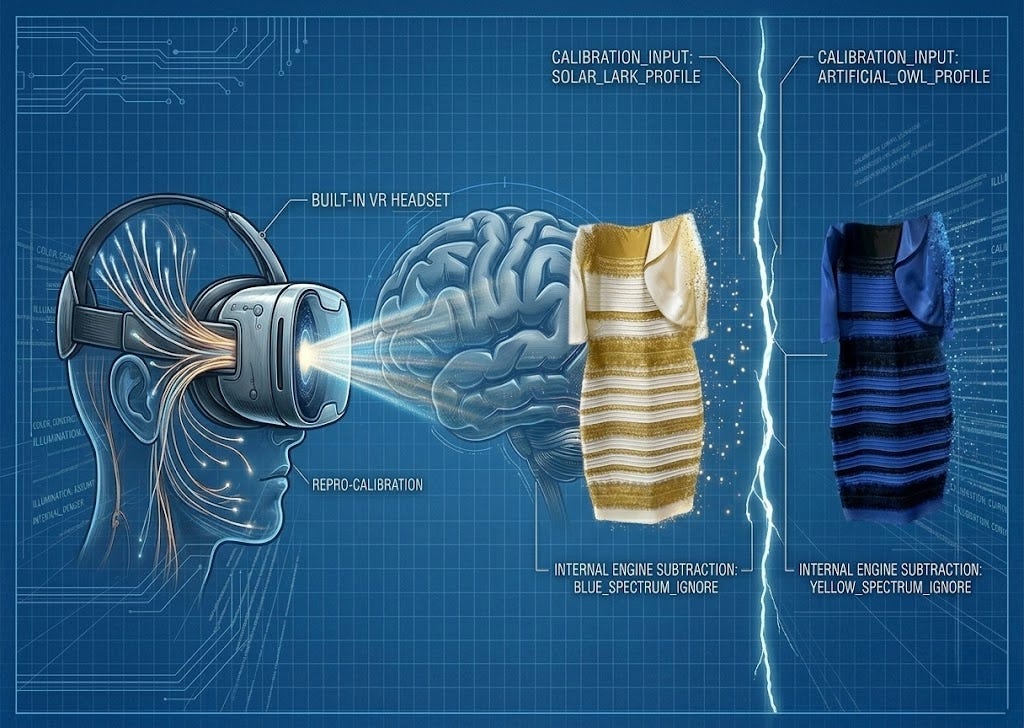

In yesterday’s Sandbox Experiment, we discovered a glitch. We found that 30% of you see a White and Gold dress, which suggests your hardware is subtracting blue light, while 57% of you see a Blue and Black dress, which suggests yours is subtracting yellow light.

The question isn’t what you saw. The question is why your internal engine chose that specific subtraction.

The Mechanism: The Light You Ignore

Research suggests your brain’s color-constancy algorithm is calibrated by the light you’ve spent your life “ignoring.”

The Lark (Natural Light): If you spend your life under the blue-heavy spectrum of the sun, your brain assumes blue is “pollution” and subtracts it.

The Owl (Artificial Light): If you live under the yellow-heavy spectrum of incandescent bulbs and other warm artificial light, your brain assumes yellow is the “pollution” and filters it out.
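
To make the subtraction concrete, here is a minimal Python sketch of the idea. It is not the model used in the research. It assumes a simple per-channel division, loosely in the spirit of von Kries adaptation, and the pixel value, both light spectra, and the function names `discount_illuminant` and `blue_minus_red` are all made up for illustration.

```python
# Hypothetical values throughout: the pixel and both illuminants are
# illustrative numbers, not measurements taken from the dress photo.

def discount_illuminant(pixel, illuminant):
    """Divide each RGB channel by the assumed light source, scaled so the
    light's brightest channel is 1.0, then clip to the displayable range."""
    peak = max(illuminant)
    corrected = [p / (i / peak) for p, i in zip(pixel, illuminant)]
    return [min(1.0, c) for c in corrected]


def blue_minus_red(rgb):
    """Crude 'how blue does it look' score: blue channel minus red channel."""
    return rgb[2] - rgb[0]


AMBIGUOUS_PIXEL = (0.50, 0.55, 0.75)  # pale bluish stripe (assumed)
BLUISH_LIGHT = (0.70, 0.80, 1.00)     # the Lark's prior: cool daylight
YELLOWISH_LIGHT = (1.00, 0.85, 0.60)  # the Owl's prior: warm indoor light

# Same raw data, two different assumptions about the light to ignore.
lark_view = discount_illuminant(AMBIGUOUS_PIXEL, BLUISH_LIGHT)
owl_view = discount_illuminant(AMBIGUOUS_PIXEL, YELLOWISH_LIGHT)

print("Lark render:", [round(c, 2) for c in lark_view])
print("Owl render: ", [round(c, 2) for c in owl_view])
```

With these assumed numbers, the Lark’s correction lands near a neutral pale gray, while the Owl’s pushes the same pixel toward a saturated blue. Same input, different prior, different render.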

But the “Lark vs. Owl” data is just the technical spec. The real revelation is much more profound.

Your Built-in VR Headset

As we noted in the Project Manual, you have never seen the “real” world. From the moment you were born, you have been wearing a built-in biological VR headset—a high-fidelity, haptic, 360-degree interface that sits between you and objective reality.

When you look at the dress, you aren’t seeing a photo. You are seeing a render—a corrected, polished version of reality generated by your headset’s internal GPU.

Your brain looked at the messy, ambiguous data of that image, reached into its own history of light and shadow, and made an executive decision. It didn’t ask your permission. It didn’t present you with options. It simply projected a reality and handed it to you as “The Truth.”

The Hardware Fact: The “Gold” or “Deep Blue” you see is a ghost created by your hardware. It exists only inside the headset.

The Great Divide

The reason this “glitch” feels so personal, and why people argue so heatedly about it, is that it threatens our most basic assumption: that if we are standing in the same room, we are seeing the same thing.

We aren’t.

We are each inhabiting a personal simulation tailored specifically to our individual sensors. Two people can stand side-by-side, look at the same object, and inhabit two entirely different visual universes.

The color isn’t “out there.” It’s being projected from the inside out. You aren’t just looking at a different version of the world; you are creating the world you see through the lens of your own internal calibration.

The Expansion: Beyond the Spectrum

Once you accept that color is an “inside job,” the floor starts to fall away. If your biological VR headset is capable of inventing a color that isn’t there—simply to create a world that “makes sense”—what else is it rendering?

Think about the texture of the chair you’re sitting in, the specific timbre of a distant siren, or the smell of rain on hot pavement. Are these objective qualities of the universe, or are they just user-interface icons created by your hardware to help you navigate a reality you can’t actually perceive?

But the rabbit hole goes deeper.

What about the emotions that flood your system? The sharp spike of anxiety at a sudden noise, the warmth of nostalgia, or the heavy weight of grief. Are these “reactions” to the outside world, or are they just more complex renderings? Is “anger” just another filter your headset applies to raw data, much like it projects “Gold” where only a muddy-brown pixel exists?

If your hardware can’t be trusted to show you the true color of a dress, why should it be trusted to show you the “truth” of your own life?

We are not just experiencing reality; we are hallucinating a world into existence, one render at a time. The question isn’t whether you’re seeing the dress correctly—it’s whether you’ve ever truly seen the world at all, or just the beautiful, incredibly useful hallucination your hardware has created for you.

Next Week’s Experiment:

If your hardware can invent a color, can it invent a person? Next week, we test the “Object Recognition” function of your headset to see why two people can look at the exact same face and see two entirely different lives.